Neural Beam 935491424 Apex Node

The Neural Beam 935491424 Apex Node integrates beamforming, neural encoding, and spatial filtering into a modular, latency-focused platform. It emphasizes deterministic scheduling, prioritized queues, and minimal buffering to maintain predictable response times at scale. The architecture relies on parallelism and resource partitioning, with energy-aware fault-tolerant orchestration across heterogeneous environments. Its modular cores and QoS-aware interconnects enable edge analytics, yet practical deployment raises questions about real-world constraints and performance under varied workloads.

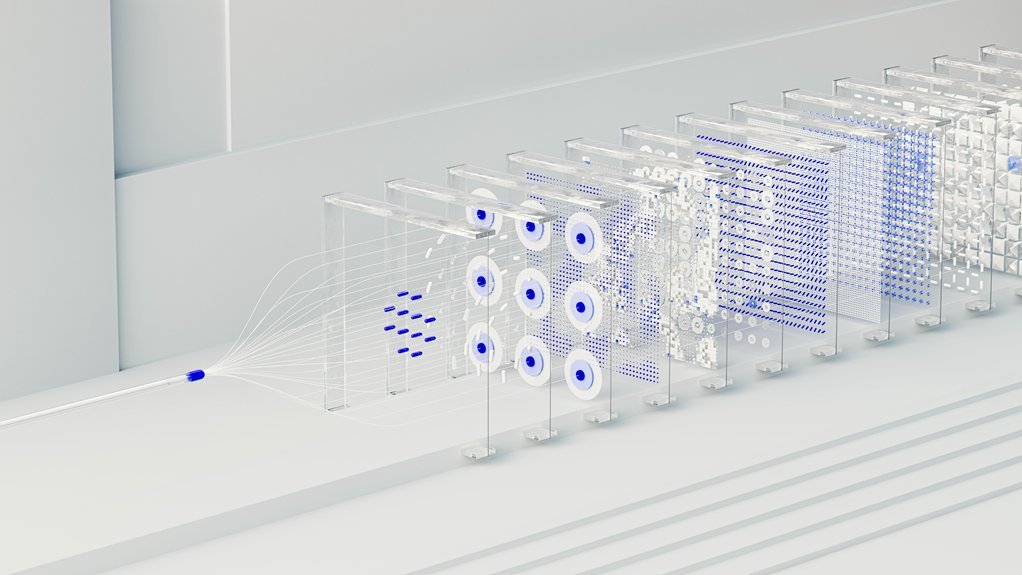

How the Neural Beam 935491424 Apex Node Works

The Neural Beam 935491424 Apex Node processes input signals through a multi-stage pipeline that combines beamforming, neural encoding, and spatial filtering. It forms a neural beam from directed sensor arrays and maps the resulting signals to compact representations within the apex node. The design prioritizes low latency at scale, aiming for efficient data flow, deterministic processing, and stable performance under varying load.
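As a rough illustration of the beamforming stage only, the sketch below implements classic delay-and-sum beamforming in plain Python. It is not taken from the Apex Node itself: the function name `delay_and_sum`, the integer sample delays, and the three-sensor test signal are all assumptions chosen to keep the example small.

```python
from typing import List, Sequence


def delay_and_sum(channels: Sequence[Sequence[float]],
                  delays: Sequence[int]) -> List[float]:
    """Shift each sensor channel by its integer sample delay, then average.

    Hypothetical helper for illustration; real arrays usually need
    fractional delays and per-channel weights.
    """
    if len(channels) != len(delays):
        raise ValueError("one delay per channel is required")
    if any(d < 0 for d in delays):
        raise ValueError("delays must be non-negative sample counts")
    # Only the span where every shifted channel still has samples is usable.
    usable = min(len(ch) - d for ch, d in zip(channels, delays))
    if usable <= 0:
        return []
    output = []
    for n in range(usable):
        total = sum(ch[n + d] for ch, d in zip(channels, delays))
        output.append(total / len(channels))
    return output


# The same pulse reaches three sensors 0, 1, and 2 samples late.
pulse = [0.0, 1.0, 0.0, 0.0]
channels = [
    pulse + [0.0, 0.0],
    [0.0] + pulse + [0.0],
    [0.0, 0.0] + pulse,
]

steered = delay_and_sum(channels, delays=[0, 1, 2])    # delays match arrival
unsteered = delay_and_sum(channels, delays=[0, 0, 0])  # no alignment
print(steered)
print(max(unsteered))
```

When the delays match the arrival times, the pulse adds coherently and keeps its full amplitude; without alignment the same energy is smeared across several samples at a third of the peak.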

Why This Node Delivers Low Latency at Scale

The Neural Beam 935491424 Apex Node sustains low latency at scale through a tightly engineered data path that minimizes buffering and serialization overhead while maximizing parallelism across its multi-stage pipeline.

Latency optimization drives the design choices: scheduling is deterministic, and work is dispatched through prioritized queues.
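One common way to get both properties at once is a priority queue with a deterministic tie-break. The sketch below is a minimal example under that assumption; the class name `PrioritizedQueue`, the priority levels, and the task labels are hypothetical and do not describe the node's actual scheduler.

```python
import heapq
import itertools
from typing import Any, List, Tuple


class PrioritizedQueue:
    """Min-heap queue: lower priority number runs first, ties run in FIFO order."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, Any]] = []
        # A monotonically increasing sequence number makes ordering
        # deterministic and keeps the heap from ever comparing payloads.
        self._sequence = itertools.count()

    def push(self, priority: int, item: Any) -> None:
        heapq.heappush(self._heap, (priority, next(self._sequence), item))

    def pop(self) -> Any:
        _priority, _seq, item = heapq.heappop(self._heap)
        return item

    def __len__(self) -> int:
        return len(self._heap)


q = PrioritizedQueue()
q.push(2, "telemetry")
q.push(0, "control")
q.push(1, "inference-a")
q.push(1, "inference-b")

order = [q.pop() for _ in range(len(q))]
print(order)
```

Because the sequence number breaks ties, two runs with the same arrival order always drain in the same order, which is the property deterministic scheduling depends on.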

Scalability strategies ensure consistent performance under load, balancing throughput, backpressure control, and resource partitioning for predictable response times.
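Backpressure is often implemented with bounded buffers between stages: when a buffer is full, the producer is told to wait or shed load instead of letting latency grow without limit. The sketch below shows that idea with Python's standard `queue.Queue`; the helper `offer` and the capacity of 2 are assumptions for illustration.

```python
import queue


def offer(stage_queue: "queue.Queue[int]", item: int) -> bool:
    """Try to enqueue without blocking; False signals backpressure to the caller."""
    try:
        stage_queue.put_nowait(item)
        return True
    except queue.Full:
        return False


# A small bound caps how much work can wait between two stages.
stage = queue.Queue(maxsize=2)

accepted = [offer(stage, i) for i in range(4)]  # last two offers are refused
drained = stage.get_nowait()                    # downstream stage takes one item
retry = offer(stage, 2)                         # space is available again
print(accepted, drained, retry)
```

A bounded buffer trades some throughput for a hard ceiling on queueing delay, which keeps response times predictable under overload.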

Key Accelerators and Architecture Inside the Apex Node

Key accelerators within the Apex Node are engineered to sustain low-latency performance at scale by combining specialized compute units, memory hierarchies, and deterministic control logic.

The architecture emphasizes modular cores, bandwidth-aware interconnects, and load-aware scheduling, which together support rapid prototyping and iteration.
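Load-aware scheduling can be as simple as sending each task to whichever core currently carries the least work. The sketch below assumes that greedy policy; the function `assign_least_loaded`, the core names, and the task costs are hypothetical, and a real scheduler would also weigh interconnect bandwidth and data locality.

```python
from typing import Dict, List


def assign_least_loaded(task_costs: List[int],
                        core_names: List[str]) -> Dict[str, List[int]]:
    """Greedily place each task on the core with the smallest accumulated cost."""
    load = {name: 0 for name in core_names}
    plan: Dict[str, List[int]] = {name: [] for name in core_names}
    for cost in task_costs:
        # min() returns the first minimum, so ties resolve by list order
        # and the resulting plan is deterministic.
        target = min(core_names, key=lambda name: load[name])
        plan[target].append(cost)
        load[target] += cost
    return plan


plan = assign_least_loaded([5, 3, 2, 4], ["core0", "core1"])
print(plan)
```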

Energy efficiency, continuous deployment, and fault tolerance are baked into accelerator orchestration and error containment mechanisms.

Real-World Use Cases and Performance Scenarios

Real-world deployments of the Apex Node span autonomous systems, edge analytics, and streaming inference workloads, where deterministic latency and scalable throughput are critical. The system is intended to sustain focused neural processing under constrained resources, with predictable behavior across heterogeneous environments.

Performance scaling remains the core objective: modular acceleration and load-aware orchestration are meant to keep quality of service consistent and give operators efficient, reliable inference.

Conclusion

The Neural Beam 935491424 Apex Node combines beamforming, neural encoding, and spatial filtering into a single coherent signal pipeline. Its architecture coordinates cores and queues to keep latency deterministic under load. Data moves over bandwidth-aware paths, and complex transforms are reduced to compact, actionable representations. For edge analytics, the intended result is scalable, real-time insight with predictable reliability.